Summary: Always check your numbers against smaller, simpler queries and figures. Use big-picture totals, such as total sales, as a reality check on more granular sales queries. When creating models, compare performance to a simpler model. Don’t assume complexity equals accuracy. Be prepared to compare against existing “gold standard” models.

Baseline Summary Statistics

As an analyst, you need to be able to do a lot of things at the same time: prioritize multiple projects, answer ad hoc questions, query multiple databases, navigate multiple software tools, and write up your analysis. Throughout all of this, you have to maintain accuracy and speed.

Smart analysts know that you need an analytical cheat sheet to help unload some of the mental burden.

As you progress in your analytical career, it’s important to memorize key stats such as number of customers, average marketing costs, customers acquired, and year-end sales. Even so, you will still encounter questions that you’ve never seen before.

If someone asks you “How many customers buy obscure product X and obscure product Y and what are their total sales for last year?”, you are probably not going to have the answer on your cheat sheet or memorized. When you encounter this situation, you need to take a step back and run simple queries.

Let’s break down the “obscure X and Y” question in terms of simple queries.

- How many customers bought X?

- How many customers bought Y?

- The smaller of these two numbers is now the upper limit on how many customers could have bought both.

- *How many customers are there in total?

- *What are the total sales for all of last year (regardless of products purchased)?

Think for a second about how these queries differ. Which query is simpler than the others?

…

…

…

Got it? Think in terms of the filters you would apply. The last two queries are actually simpler because they apply no filters at all: you’re counting and adding up everything.

The last two are key queries to either run or know. By knowing the big-picture numbers, you can quickly check whether your more granular numbers make sense. Imagine your query says “obscure product X” had $100,000 in sales last year. If your total sales for last year are only $1,000,000, does it make sense that an obscure product makes up 10% of sales? It would lead me to believe that my product X query is wrong.
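The checks described above can be sketched with Python's built-in `sqlite3` module. This is a minimal illustration only: the `sales` table, its columns, and every value in it are made up for the example and stand in for whatever your real database looks like.

```python
import sqlite3

# Hypothetical toy data: (customer_id, product, amount). Not real figures.
rows = [
    (1, "X", 4.0), (1, "Y", 10.0), (1, "A", 200.0),
    (2, "X", 6.0), (2, "A", 150.0),
    (3, "Y", 25.0), (3, "B", 300.0),
    (4, "A", 120.0),
    (5, "B", 95.0),
]

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (customer_id INTEGER, product TEXT, amount REAL)")
conn.executemany("INSERT INTO sales VALUES (?, ?, ?)", rows)


def scalar(sql, params=()):
    """Run a query that returns a single value."""
    return conn.execute(sql, params).fetchone()[0]


# Baseline queries: no filters at all.
total_customers = scalar("SELECT COUNT(DISTINCT customer_id) FROM sales")
total_sales = scalar("SELECT SUM(amount) FROM sales")

# One filter each.
count_sql = "SELECT COUNT(DISTINCT customer_id) FROM sales WHERE product = ?"
bought_x = scalar(count_sql, ("X",))
bought_y = scalar(count_sql, ("Y",))

# The granular question: customers who bought both X and Y.
bought_both = scalar(
    """
    SELECT COUNT(*) FROM (
        SELECT customer_id FROM sales
        WHERE product IN ('X', 'Y')
        GROUP BY customer_id
        HAVING COUNT(DISTINCT product) = 2
    )
    """
)

x_sales = scalar("SELECT SUM(amount) FROM sales WHERE product = ?", ("X",))
x_share = x_sales / total_sales

# Reality checks against the baselines.
assert bought_both <= min(bought_x, bought_y) <= total_customers
assert x_sales <= total_sales

print(f"X: {bought_x}, Y: {bought_y}, both: {bought_both}, all: {total_customers}")
print(f"X share of total sales: {x_share:.1%}")
```

If either assertion fails, or the share printed for an “obscure” product looks too large, the granular query is the first place to look.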

It’s so easy to duplicate data in a SQL query or any tool that requires joining data together. Running these simpler queries lets you have baseline numbers to check against.
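Here is one way a join can silently inflate a total, again as a small sketch using `sqlite3`. The `orders` and `addresses` tables and their contents are hypothetical; the point is only that a customer with two address rows makes each of their orders match twice.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE orders (order_id INTEGER, customer_id INTEGER, amount REAL);
    CREATE TABLE addresses (customer_id INTEGER, city TEXT);

    INSERT INTO orders VALUES (1, 10, 100.0), (2, 10, 50.0), (3, 20, 75.0);
    -- Customer 10 has two address rows, so each of their orders matches twice.
    INSERT INTO addresses VALUES (10, 'Austin'), (10, 'Dallas'), (20, 'Boston');
    """
)

# Baseline: total sales straight from the orders table, no joins.
baseline_total = conn.execute("SELECT SUM(amount) FROM orders").fetchone()[0]

# Same total after joining to a table with duplicate keys.
joined_total = conn.execute(
    """
    SELECT SUM(o.amount)
    FROM orders AS o
    JOIN addresses AS a ON a.customer_id = o.customer_id
    """
).fetchone()[0]

print(f"baseline: {baseline_total}, after join: {joined_total}")
if joined_total != baseline_total:
    print("Totals disagree: the join is probably duplicating rows.")
```

Without the baseline number, the inflated total looks like any other result; with it, the mismatch is obvious.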

Baseline Models

Most of the time, a simpler model can be just as powerful as a complex one.

An embarrassing number of models in the world are likely to be over-complicated. Analysts spend hours poring over the data, engineering features, visualizing the data, and producing models. Sometimes it’s easy to miss the forest for the trees.

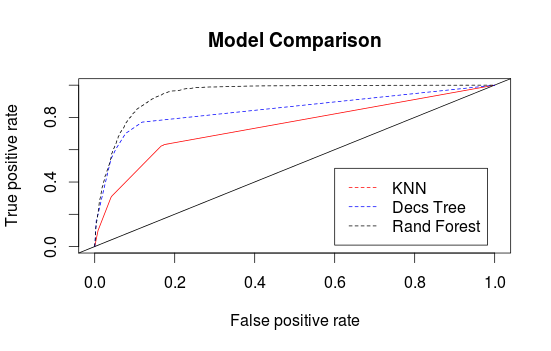

Take the example ROC Curve above. We’re comparing K-Nearest Neighbors, a Decision Tree, and a Random Forest model. That’s ordered from simplest to most complex.

Each step up in complexity does give better performance, but at what cost? This can’t be answered easily, and it depends on the situation. Sometimes a simpler but weaker model is preferable.

- Does the time it takes to build the complicated model take time away from other higher-value projects?

- Does the complicated model take up more resources to use (Random Forests need to run through hundreds of individual trees)?

- Does the complicated model improve only on the tail ends of your predictions?

Simple baselines exist for most problems. In time series analysis, for example, a drift model just uses the last time period’s value and the average change in the time series to predict what’s next.
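As a rough sketch of the drift idea in plain Python: the forecast is the last observed value plus the average historical change for each step ahead. The function names and the `monthly_sales` figures are invented for illustration.

```python
def drift_forecast(series, horizon=1):
    """Forecast `horizon` steps ahead: last value plus average change per step.

    The average of the period-to-period changes simplifies to
    (last - first) / (n - 1).
    """
    if len(series) < 2:
        raise ValueError("need at least two observations to estimate drift")
    avg_change = (series[-1] - series[0]) / (len(series) - 1)
    return [series[-1] + h * avg_change for h in range(1, horizon + 1)]


def naive_forecast(series, horizon=1):
    """Even simpler baseline: repeat the last observed value."""
    return [series[-1]] * horizon


# Hypothetical monthly sales figures, made up for this example.
monthly_sales = [100.0, 104.0, 103.0, 109.0, 112.0]

print("naive:", naive_forecast(monthly_sales, horizon=3))
print("drift:", drift_forecast(monthly_sales, horizon=3))
```

Any more elaborate forecasting model should at least beat these two on held-out periods before it earns its extra complexity.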

Predicting just the average or the most common class based on some segment of the data (called the One Rule Method) is often very accurate. It’s surprising how often a single variable or two can really separate your data well.
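To make this concrete, here is a small, simplified sketch of a one-rule classifier next to a plain majority-class baseline. It is an illustration under assumptions, not a reference implementation: the feature names (`region`, `loyalty`), the records, and the labels are all hypothetical, and accuracy is measured on the same data used to build the rule, so a real comparison should use held-out data.

```python
from collections import Counter, defaultdict


def majority_class(labels):
    """Most common label; ties go to the label seen first."""
    return Counter(labels).most_common(1)[0][0]


def one_rule(records, labels):
    """Pick the single feature whose per-value majority vote fits the data best.

    Returns (feature, rule, fallback), where rule maps feature value -> class
    and fallback is the overall majority class for unseen values.
    """
    fallback = majority_class(labels)
    best = None
    for feature in records[0]:
        by_value = defaultdict(list)
        for rec, lab in zip(records, labels):
            by_value[rec[feature]].append(lab)
        rule = {value: majority_class(labs) for value, labs in by_value.items()}
        correct = sum(rule[rec[feature]] == lab for rec, lab in zip(records, labels))
        if best is None or correct > best[0]:
            best = (correct, feature, rule)
    _, feature, rule = best
    return feature, rule, fallback


def accuracy(predictions, labels):
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


# Hypothetical customers: did they respond to a mailing?
records = [
    {"region": "north", "loyalty": "yes"},
    {"region": "north", "loyalty": "no"},
    {"region": "south", "loyalty": "yes"},
    {"region": "south", "loyalty": "no"},
    {"region": "east", "loyalty": "yes"},
    {"region": "east", "loyalty": "no"},
    {"region": "north", "loyalty": "no"},
    {"region": "south", "loyalty": "yes"},
]
labels = ["yes", "no", "yes", "no", "yes", "no", "no", "no"]

feature, rule, fallback = one_rule(records, labels)
baseline_acc = accuracy([fallback] * len(labels), labels)
one_rule_acc = accuracy([rule.get(r[feature], fallback) for r in records], labels)

print(f"majority-class baseline accuracy: {baseline_acc:.3f}")
print(f"one rule on '{feature}': accuracy {one_rule_acc:.3f}")
```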

The simplest way of putting this idea into perspective is this: If you’re going to spend all this time tuning hyperparameters or engineering new features, why wouldn’t you spend ten minutes trying a simple algorithm?

Champion vs Challenger Models

The final concept you should always be aware of as you develop new models and new ideas is that your organization has probably already tried a lot of “new” ideas.

Since you’re in business, it’s rare for the people in charge to be doing something that doesn’t work pretty well; otherwise, they would go out of business. You are challenged with beating the “gold standard” of your company.

When creating your own model, always compare it to the existing, gold standard methods. For example, if your marketing strategy is to always mail the top N% of customers who buy product X, you had better be able to beat that gold standard. You’ll find it’s often difficult to beat it! Your model is the challenger that has something to prove.
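A champion-versus-challenger comparison can be as simple as asking how many actual responders each approach would have reached with the same mailing budget. The sketch below assumes a holdout set where the outcome is known; the spend figures, challenger scores, and responses are all invented for the example.

```python
def responders_in_top(scores, responded, percent):
    """Count responders among the top `percent` of customers ranked by score."""
    n_mail = max(1, len(scores) * percent // 100)
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sum(responded[i] for i in ranked[:n_mail])


# Hypothetical holdout data for ten customers.
# Champion: the existing rule, rank customers by their product X spend.
product_x_spend = [500, 420, 300, 250, 120, 90, 60, 30, 10, 0]
# Challenger: scores from a new model (made-up numbers).
challenger_score = [0.9, 0.4, 0.8, 0.3, 0.7, 0.2, 0.6, 0.1, 0.5, 0.05]
# 1 if the customer actually responded to the mailing, else 0.
responded = [1, 1, 0, 1, 0, 0, 1, 0, 0, 0]

percent = 30  # both approaches get to mail the same top 30%
champion_hits = responders_in_top(product_x_spend, responded, percent)
challenger_hits = responders_in_top(challenger_score, responded, percent)

print(f"champion reaches {champion_hits} responders")
print(f"challenger reaches {challenger_hits} responders")
if challenger_hits <= champion_hits:
    print("The challenger has not beaten the gold standard yet.")
```

In this made-up data the existing rule wins, which is exactly the situation worth discovering before presenting a new model.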

With this mindset, you can earn more credibility with your model development if you incorporate the existing gold standard into your model. In most cases, it’s better to be able to say “We’re enhancing our existing way of doing things” than “We’re rewriting the playbook with all new ideas!”

Bottom Line: A good analyst keeps an eye on the big picture by running less complicated queries and models to give reality checks. You’re up against many pre-existing and less complicated models already. Do your due diligence, check your numbers, and try multiple ideas.